AMD CEO Dr. Lisa Su At The Company’s Financial Analyst Day 2025 Discusses AI Accelerator Roadmap

Dave Altavilla

At AMD’s Financial Analyst Day in New York this week, the company underscored a clear message: the AI era isn’t just expanding the demand for compute, it’s redefining it. And AMD believes it’s positioned to gain meaningful share as AI training and inference reshape data center architectures, client PCs, intelligent edge devices and embedded systems.

With a tee-up from its amazingly accomplished CEO Dr. Lisa Su, AMD execs outlined a long-term growth model that calls for more than 35% compound annual revenue growth at the company level and a CAGR of more than 60% in its profitable data center business over the next three to five years. AMD also reiterated its view that AI-driven infrastructure will help push the broader data center compute market toward a $1 trillion opportunity by 2030. The company anchored these projections in explicit long-term revenue targets, and the framework sets clear expectations: AMD sees itself as a central innovator and player in the next major wave of accelerated computing.
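To put those growth rates in perspective, here is a minimal sketch of the compounding arithmetic. It treats the "more than 35%" and "more than 60%" targets as exact annual rates, which is a simplifying assumption, since AMD framed them as floors and did not tie them to a specific baseline in the material discussed here. The function name `growth_multiple` is purely illustrative.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Cumulative revenue multiple implied by compounding `cagr` for `years`."""
    return (1.0 + cagr) ** years


# Assumed rates: the floor of each stated target, not AMD guidance for any single year.
targets = {"company": 0.35, "data center": 0.60}

for label, rate in targets.items():
    for years in (3, 5):
        multiple = growth_multiple(rate, years)
        print(f"{label}: {rate:.0%} CAGR over {years} years -> {multiple:.2f}x")
```

Under these assumptions, a 35% CAGR roughly multiplies revenue by 2.5 over three years and by about 4.5 over five, while a 60% CAGR implies roughly a 4x to 10x increase over the same windows.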

The event focused on how AMD plans to achieve this through deeper integration across CPUs, GPUs, networking technologies, adaptive computing, rack-scale architecture and a steadily improving software enablement stack. It’s a confident, cohesive strategy that reflects just how dramatically AMD has transformed its position in the industry in recent years.

Data Center Is AMD’s Growth And Profit Engine

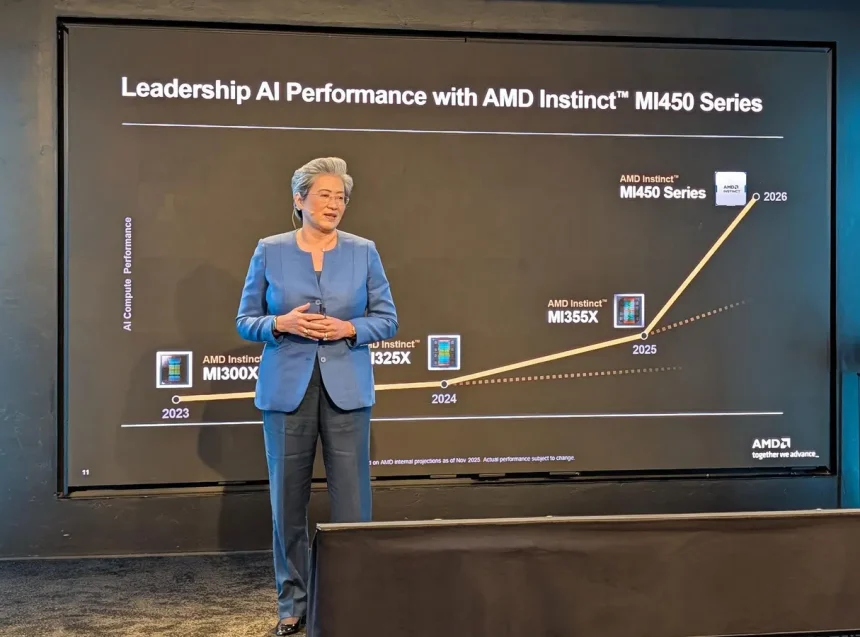

Data centers remain the foundation of AMD’s growth engine, and its Financial Analyst Day put that squarely into focus. AMD highlighted the strong ramp of its Instinct MI300 series accelerators and mapped out a detailed AI GPU roadmap through 2027. MI350 is shipping now, with MI450 arriving in AMD’s Helios rack-scale systems in the second half of 2026 and MI500 following in 2027.

AMD’s AI Accelerator Rack Scale Systems, Helios And Next-Gen (Credit: AMD)

Full rack-scale design is becoming increasingly critical. Hyperscalers are no longer just buying chips; they’re buying integrated AI clusters where GPUs, CPUs, intelligent network cards, fabrics, memory and software work as a unified platform. AMD’s Helios rack system designs, with up to 72 liquid-cooled MI450 GPUs, are built to compete at this level, where performance, density and total cost of ownership increasingly determine purchasing decisions. It’s important to note, however, that AMD will not sell rack-level products directly. Instead, it will rely on partner OEMs and its manufacturing partner Sanmina to deliver these full rack systems to its enterprise and cloud data center customers.

AMD Senior Vice President and General Manager, Compute & Enterprise AI, Dan McNamara (Credit: Dave Altavilla)

On the CPU front, EPYC continues to be a critical pillar. AI clusters still depend on high-performance CPUs for data preparation, orchestration and inference scheduling. AMD’s next-generation EPYC “Venice” Zen 6 processors will target this workload mix with improvements in efficiency and thread density. The company reiterated its goal of driving server CPU revenue share above 50%, a target that would represent a decisive shift in the long-standing AMD–Intel competitive dynamic in data center servers.

Networking and adaptive computing are also becoming more central to AMD’s platform. Pensando DPUs, smart NICs and upcoming AI-optimized networking silicon are designed to relieve interconnect bottlenecks that increasingly limit AI cluster utilization and throughput.

Meanwhile, software remains a critical part of the equation. AMD noted roughly 10× year-over-year growth in ROCm downloads, a sign of increasing developer engagement with its AI accelerators as the ecosystem matures. ROCm still trails Nvidia’s CUDA in maturity and breadth, but this adoption curve suggests AMD’s investments are bearing fruit.

AI PCs And AMD’s Client Opportunity

While the data center drew most of the spotlight, AMD emphasized that the client PC market is also at a turning point with the rise of AI-enabled systems. Ryzen now powers more than 250 PC platforms, and the company is on track for north of $14 billion in PC Client and Gaming revenue for 2025. Further, AMD’s upcoming “Gorgon” (early 2026) and “Medusa” (early 2027) chip architectures were previewed with the promise of significant generational gains in on-device AI compute.

For consumers, this translates to faster content creation, accelerated photo and video workflows and improved productivity. For businesses, AMD expects AI PCs to play a key role in upcoming refresh cycles as organizations evaluate local, on-device AI capabilities for security, collaboration and analytics.

As AI PCs truly evolve into a meaningful new category, rather than remaining a short-lived marketing buzzword, AMD should be positioned to gain incremental share in both consumer and commercial markets.

AMD Semi-Custom And Embedded: Revenue Stability And Robotics Growth

Beyond its AI-centric roadmap, AMD’s semi-custom and embedded businesses continue to provide both revenue diversification and strategic flexibility. AMD’s semi-custom business remains anchored by console platforms, but the company is also expanding into custom silicon for handheld gaming and other immersive devices. These programs tend to offer predictable revenue and longer product life cycles.

Embedded and adaptive computing are equally important as they move deeper into industrial automation, automotive systems, aerospace, networking and edge AI deployments. These markets may not deliver the rapid growth of AI accelerators, but they offer key revenue diversification. Since 2022, AMD claims it has amassed north of $36 billion in embedded compute design wins, with new semi-custom silicon opportunities adding another $15 billion to its embedded design portfolio. The company projects a 21% CAGR moving forward.

AMD’s Robotics Solutions To Address A Projected $200B TAM By 2035 (Credit: AMD)

Another area AMD has had its sights set on for some time now is physical AI, aka robotics. AMD sees robotics as a fast-expanding frontier where its mix of CPUs, GPUs and adaptive compute solutions can play an outsized role. Industrial automation, warehouse logistics, surgical robotics and next-gen autonomous systems all require a blend of real-time control, sensor fusion, edge inference and safety-certified compute, areas where AMD’s embedded and adaptive SoCs are already gaining traction. As more robotics workloads shift to on-device AI and deterministic acceleration, AMD’s FPGA-based platforms and embedded x86 architectures position the company to capture design wins in factories, labs and autonomous machines. While still in its early stages, robotics represents a meaningful adjacent market opportunity that the company pegs at a cool $200 billion by 2035, and it aligns directly with AMD’s strategy of extending AI from the data center to the edge.

Together, its semi-custom and embedded segments help smooth volatility across AMD’s higher-growth businesses while ensuring the company maintains a presence in markets that require long-lifecycle, adaptable, customizable silicon solutions.

AMD’s Target Market Impact: Ambitious And Increasingly Credible

AMD’s Financial Analyst Day 2025 painted a picture of a company coming into its own at exactly the moment when AI demand is reshaping the computing landscape. The growth projections are undeniably aggressive, and competition from Nvidia, Intel and bespoke hyperscaler-designed silicon remains intense. On a side note, I’m a technology analyst, but I must admit I think some headline-grabbing numbers floating around post-event, particularly those circulating in media coverage, stem more from interpretation than from explicit AMD guidance.

AMD CTO Mark Papermaster Discusses The Major Blocks AMD Technologies Play In Its Helios GPU Rack (Credit: Dave Altavilla)

Even so, the company’s recent execution gives its roadmap and projections plenty of credibility. EPYC continues to gain share versus Intel. Instinct MI355X and MI450 have secured real wins with major hyperscalers like Oracle, in addition to AMD’s long-term partnership with OpenAI. Ryzen remains aggressively competitive across consumer and commercial PCs. And ROCm’s developer momentum is a big step forward for AMD’s software enablement story.

If AMD can continue expanding EPYC server share, translate MI3XX and MI4XX traction into more meaningful AI accelerator share, advance ROCm adoption and capture a larger portion of the AI PC refresh, the financial impact over the next several years could indeed be substantial.

Analyst Day 2025 ultimately showcased a company confident in its roadmap and clear about where the market is heading. The AI era is accelerating, and AMD appears increasingly well-positioned to play a larger role in shaping it.

Dave Altavilla co-founded and is principal analyst at HotTech Vision And Analysis, a tech industry analyst firm specializing in consulting, test validation and go-to-market strategies for major chip and system OEMs. Like all analyst firms, HTVA provides paid services, research and consulting to many chip manufacturers and system OEMs, including companies mentioned in this article. However, this does not influence his unbiased, objective coverage.